Date: 2026-03-15

Author: Ravi Natarajan

Motivation

Modern AI systems increasingly rely on multiple models with different strengths, limitations, costs, latency profiles, and governance constraints.

A single model is often not the best choice for every task.

Some requests may be better handled by a smaller and faster model, while others may require a stronger reasoning model, a domain-specific model, or a provider approved for enterprise use.

Hardcoding these decisions inside every agent or workflow creates tight coupling and reduces flexibility.

K9-AIF introduces the concept of a Model Router to address this problem in a structured and extensible way.

What is a Model Router?

A Model Router is a capability that selects the most appropriate model or inference provider for a given inference request.

Its responsibility is not to perform the task itself, but to decide which model should handle the request.

This allows the rest of the system to request inference through a stable abstraction while the actual model choice is determined by routing policy.

Architectural Context

Within K9-AIF, the Model Router belongs to the inference layer.

It exists to support model selection without forcing agents, orchestrators, or other runtime components to depend directly on specific model providers.

This creates a cleaner separation between:

- task execution logic

- model/provider selection logic

The result is a more modular and portable architecture.

Why It Matters

Without a model router, model choices are often embedded directly inside code.

That may work for small experiments, but it quickly becomes difficult to manage in real systems where teams need to balance:

- quality

- latency

- cost

- provider availability

- governance controls

- deployment environment

- operational fallback requirements

A model router centralizes those concerns and makes them manageable.

Design Goals

The K9 Model Router aims to support:

- provider independence

- model abstraction

- cost-aware selection

- latency-aware selection

- quality-aware routing

- policy-driven routing

- governance-aligned controls

- extensible routing strategies

These goals are important because model choice is not only a technical decision — it is often an architectural and operational decision.

Default Router

K9-AIF can provide a simple default model router capable of selecting models using straightforward rules or configuration.

A default implementation may consider factors such as:

- task type

- configured provider priority

- model class or model size

- environment or deployment context

- enterprise allow-list / deny-list rules

This provides a practical baseline while keeping the design open for future extension.

Advanced Routing

More advanced routers may implement richer strategies such as:

- quality-based selection

- benchmark-informed routing

- cost / latency / quality trade-offs

- provider fallback and failover

- dynamic policy evaluation

- adaptive model selection over time

These more advanced implementations can be introduced without changing the rest of the application architecture, because the routing concern remains isolated.

This is one of the main architectural benefits of the approach.

Why K9-AIF Treats This as an Architectural Concern

In many AI projects, model selection is treated as an implementation detail.

K9-AIF treats it as a first-class architectural concern.

That is important because the chosen model affects not only response quality, but also:

- operational cost

- performance

- reliability

- compliance posture

- deployment flexibility

- long-term maintainability

By isolating model selection behind a dedicated routing capability, K9-AIF makes this concern explicit and governable.

Extensibility

The Model Router is designed to be extensible.

Organizations may begin with a simple default router and later replace or extend it with more sophisticated strategies as their needs evolve.

This makes the capability suitable for both:

- lightweight local experimentation

- enterprise-grade production systems

More advanced implementations may also be packaged as reusable Solution Building Blocks (SBBs).

Persistence Support

The K9 Model Router can optionally persist runtime routing state.

This includes:

- session records

- user turns

- routing decisions

- model affinity

This capability allows the router to evolve from simple one-shot selection into a session-aware routing component.

For example, future routing decisions may consider prior interactions, model affinity, or context summarization.

By default, K9-AIF now provides SQLite-based persistence out of the box, allowing the Model Router to work immediately without requiring any external database setup.

For enterprise deployments, the router can be configured to use PostgreSQL.

Persistence backend selection is controlled through config.yaml.

A simple smoke test script (./test_model_router.sh) is included to verify the router and end-to-end inference flow.

Conclusion

The K9 Model Router helps separate inference access from model/provider decision-making.

This separation improves portability, maintainability, governance, and future flexibility.

Rather than embedding model choices across the system, K9-AIF provides a cleaner architectural approach for managing inference in a structured and extensible way.

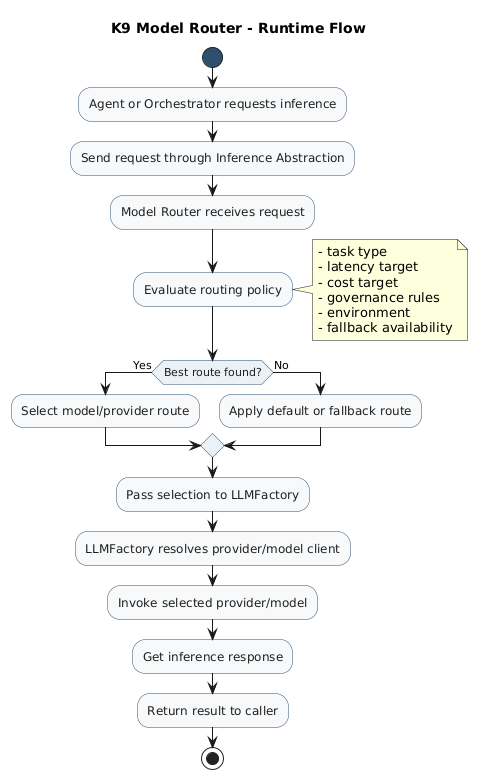

K9 Model Router in K9-AIF

The following diagram shows how the Model Router fits within the

K9-AIF inference layer and interacts with LLMFactory and providers.

Learn More

K9-AIF is an architecture-first framework for modular and governed agentic AI systems.

More at: